Vision & Overview

I haven't filled this out loloops

I am a principal scientist at Microsoft and a researcher (PGR) at the University of York. I work on projects related to fundamental problems in AI and science, such as cognition, measurement, and social impact.

By building a perceptron in AoE II, I show that research assuming or concluding LLM anthropomorphism is flawed.

PDF | Gates | BibTeX

Invited talk at i3D 2026 on my paper "Will GPT-4 Run DOOM?"

Slides (auto-downloads)

With Wei Li. We show that improving dialectal performance in LLMs requires more powerful algorithmic techniques than simply stats.

PDF | Code | BibTeX

With Tangming Yuan. We posit that LLMs have shallow, but not entirely void, understanding.

PDF | Code | BibTeX | Press

With Anubhav Jangra, Bahareh Sarrafzadeh, Silviu Cucerzan, and Sujay Kumar Jauhar (co-PI). We find that personalisation measurements are highly unreliable between metrics and approaches.

PDF | BibTeX

It is, but very weak and brittle. Chosen by the Turing Post as one of the 23 most influential papers in 2025.

PDF | Code | BibTeX | Press

Excited to have been named a Thinking about Thinking fellow for 2026!

Read more

Invited talk at MBZUAI's research showcase (2025)

Slides

Adrian de Wynter is a principal scientist at Microsoft and a researcher (PGR) at the University of York. His main work is related to fundamental problems in AI and science, such as cognition (e.g., understanding, reasoning) in machines, measurement (in science, like evals), and social impact.

His primary research interest is the interplay of intelligence, cognition, and reasoning between humans and machines. This touches upon foundational aspects of AI, such as the question of whether showcasing understanding is required for 'good' dialogue (it's not), or whether LLMs are able to learn, as opposed to relying on their intrinsic knowledge (they can). He has also argued that some assumptions in LLM research regarding anthropomorphism are flawed, by showing that one could build an LLM anywhere (e.g., the Greater Boston Area or Age of Empires II) and retain some of its properties, but not its human-like attributes. He also studied the ability of LLMs/agents to interact with their environment (by using DOOM!), showing they lack spatial reasoning skills and object permanence.

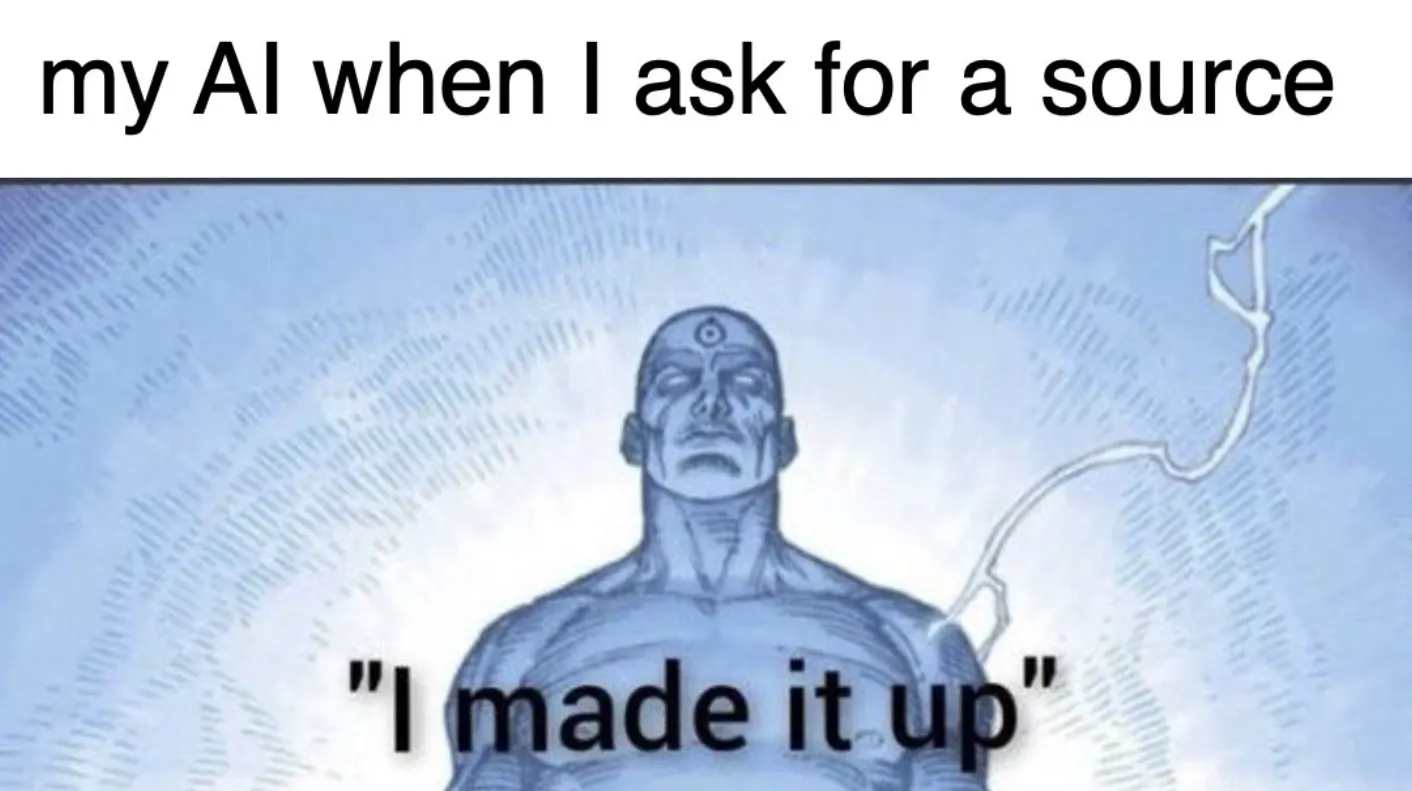

Measurement-wise, he developed an algorithm with cryptographic guarantees for determining trust in evaluators (e.g., LLMs-as-judges). He's also done some meta-science work, such as research on LLM research (e.g., LLMs), and another neat paper (in his opinion) coming soon. Earlier theoretical work showed with category theory that some prompting strategies are (formally) better than others, and how (and when) LLM-based data augmentation works.

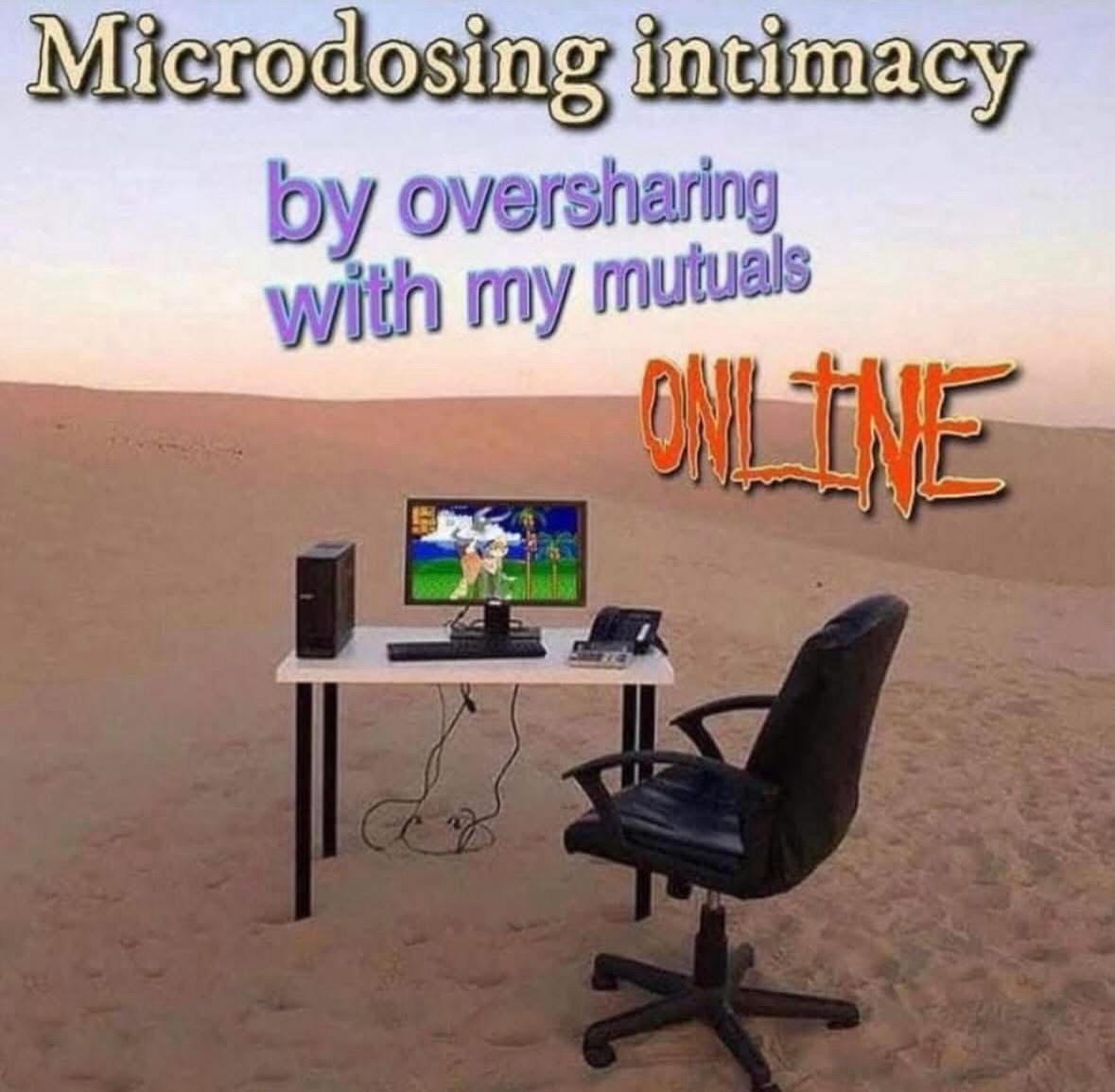

His other research interests include recreational mathematics (games), preserving endangered languages, and computational social science. In the latter he has worked on mitigating toxicity, unfairness, and other harms of LLMs; personalisation and sycophancy in LLMs; and carried out one of the first studies of the impact of ChatGPT on loneliness. He also has stuff in SIGBOVIK 2025 and 2026.

In terms of service, he has--like everyone and their grandma--reviewed for AAAI, *ACL, NeurIPS, ICML, ICLR and so on (and was named a gold reviewer, which honestly is really nice). He also reviews for Nature (AI & Ethics; Artificial Intelligence, and Communications), IEEE Transactions on Games, and ACM TIST. He was recently named a Thinking About Thinking fellow.

In his spare time, Adrian enjoys photography, cooking, type 2 fun, and divulging personal information to strangers on the internet.


A condensed summary of my paper, with some clarifications. And, sadly, the tacky AI-generated image because people won't read this otherwise.

Read →

Yes but no. Links to code, resources, TL;DR of the paper, and videos of the model playing the game.

Read →

This is the TL;DR of my paper Algorithmically Establishing Trust in Evaluators.

Read →

This is a less rigorous version of my paper Turing Completeness and Sid Meier’s Civilization, published in IEEE Transactions on Games.

Read →

Some random stuff that I didn't know where to put

Read →